Week 3: Exercises#

The exercises are intended to be done by hand unless otherwise stated (such as when you are asked to plot a graph or run a script).

Exercises – Long Day#

from sympy import*

from dtumathtools import*

init_printing()

def inner(x1, x2):
    '''
    Computes the standard inner product of two vectors.
    '''
    return x1.dot(x2, conjugate_convention = 'right')

1: Orthonormal Basis (Coordinates)#

Question a#

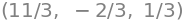

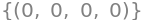

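Do the vectors

\[\pmb{u}_1 = \tfrac{1}{3}[1,2,2]^T, \quad \pmb{u}_2 = \tfrac{1}{3}[2,1,-2]^T, \quad \pmb{u}_3 = \tfrac{1}{3}[2,-2,1]^T\]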

constitute an orthonormal basis in \(\mathbb{R}^3\)?

Hint

The vectors need to be pairwise orthogonal, meaning they must be perpendicular to each other. How do we check for that?

Hint

Two vectors are orthogonal, precisely when their scalar product (dot product) is 0. But more is needed for them to be orthonormal.

Hint

A basis is orthonormal if the vectors are mutually orthogonal and each of them has a length (norm) of 1.

Answer

\(\langle \pmb{u}_1, \pmb{u}_2 \rangle = \langle \pmb{u}_1, \pmb{u}_3 \rangle = \langle \pmb{u}_2, \pmb{u}_3 \rangle = 0\) and all three vectors have a norm of 1, so the three vectors constitute an orthonormal basis for \(\mathbb{R}^3\).
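The hand calculation can be checked in one step, since entry \((i,j)\) of \(U^T U\) is the dot product \(\langle \pmb{u}_i, \pmb{u}_j \rangle\). The following is a minimal sketch using plain SymPy; the names `gram` and `is_orthonormal` are chosen here for illustration only.

```python
from sympy import Matrix, eye

# The three vectors from the exercise, collected as the columns of U
u1 = Matrix([1, 2, 2]) / 3
u2 = Matrix([2, 1, -2]) / 3
u3 = Matrix([2, -2, 1]) / 3
U = Matrix.hstack(u1, u2, u3)

# Entry (i, j) of U^T U is the dot product of u_i and u_j, so the columns
# are orthonormal exactly when U^T U is the identity matrix.
gram = U.T * U
is_orthonormal = (gram == eye(3))
print(is_orthonormal)
```

The off-diagonal entries of `gram` cover the three orthogonality checks, and the diagonal entries are the squared norms.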

Question b#

Consider the vector \(\pmb{x} = [1,2,3]^T\). Calculate the inner products \(\langle \pmb{x}, \pmb{u}_k \rangle\) for \(k=1,2,3\).

Hint

These are just the usual dot products \(\pmb{x} \cdot \pmb{u}_k\).

Question c#

Let’s denote the basis by \(\beta = \pmb{u}_1, \pmb{u}_2, \pmb{u}_3\). State the coordinate vector \({}_{\beta} \pmb{x}\) of \(\pmb{x}\) with respect to \(\beta\). Calculate the norm of both \(\pmb{x}\) and the coordinate vector \({}_{\beta} \pmb{x}\).

u1 = Matrix([1,2,2])/3

u2 = Matrix([2,1,-2])/3

u3 = Matrix([2,-2,1])/3

x = Matrix([1,2,3])

inner(x, u1), inner(x, u2), inner(x, u3)

# Calculated in the previous question. Check:

U = Matrix.hstack(u1, u2, u3) # Change-of-basis matrix from the orthonormal beta basis to the standard basis

e_x = x

beta_x = U.T * e_x # U is unitary, so U^T = U^(-1) is the change-of-basis matrix from the standard basis to the orthonormal beta basis

beta_x

# Another check

inner(x, u1) * u1 + inner(x, u2) * u2 + inner(x, u3) * u3

x.norm(), beta_x.norm()

Since \(U^T\) is unitary, it preserves the norm of every vector, so \(\pmb{x}\) and \({}_{\beta} \pmb{x}\) have the same norm.
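The reason behind this can be written out in one line. The derivation below only uses \(U U^T = I\), which holds because the columns of \(U\) are orthonormal and \(U\) is square:

\[\Vert U^T \pmb{x} \Vert^2 = (U^T \pmb{x})^T (U^T \pmb{x}) = \pmb{x}^T U U^T \pmb{x} = \pmb{x}^T \pmb{x} = \Vert \pmb{x} \Vert^2.\]

For \(\pmb{x} = [1,2,3]^T\) this can be confirmed by hand: \({}_{\beta} \pmb{x} = \tfrac{1}{3}[11,-2,1]^T\), and

\[\Vert {}_{\beta} \pmb{x} \Vert^2 = \tfrac{1}{9}(121 + 4 + 1) = 14 = 1 + 4 + 9 = \Vert \pmb{x} \Vert^2.\]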

Question d#

Create the \(3\times 3\) matrix \(U = [\pmb{u}_1 \vert \pmb{u}_2 \vert \pmb{u}_3]\) with \(\pmb{u}_1, \pmb{u}_2, \pmb{u}_3\) as its three columns. Calculate \(U^T \pmb{x}\) and compare the result with that from the previous question.

2: Orthonormal Basis (Construction)#

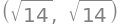

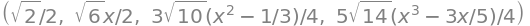

Create an orthonormal basis for \(\mathbb{R}^3\), within which

is the first basis vector.

Hint

You can, for example, start by guessing a unit vector that is perpendicular to the given vector.

Hint

What about \(\left(0,0,1\right)\)?

Hint

Can you guess one more? Simply start by finding a vector that is perpendicular to both the given vector and \(\left(0,0,1\right)\).

Hint

What about \(\left(1,-1,0 \right)\)? What are we still missing now?

Hint

\(\left(1,-1,0 \right)\) needs to be normalized.

Answer

A suggestion for an orthonormal basis is

but there are other possibilities! Think about why that is the case.
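A candidate basis can be tested with a few lines of SymPy. The sketch below assumes that the given first vector is \(\frac{1}{\sqrt{2}}(1,1,0)\); this is not stated explicitly above, but it is consistent with the hints, since both \((0,0,1)\) and \((1,-1,0)\) are perpendicular to it. The helper name `is_orthonormal` is made up for this example.

```python
from sympy import Matrix, sqrt, eye, simplify

# ASSUMPTION: the given first basis vector is (1, 1, 0)/sqrt(2).
u1 = Matrix([1, 1, 0]) / sqrt(2)
u2 = Matrix([0, 0, 1])              # guess from the hints
u3 = Matrix([1, -1, 0]) / sqrt(2)   # second guess, normalized

def is_orthonormal(*vectors):
    """True if the given column vectors are pairwise orthogonal unit vectors."""
    U = Matrix.hstack(*vectors)
    return simplify(U.T * U) == eye(len(vectors))

print(is_orthonormal(u1, u2, u3))    # the suggested basis
print(is_orthonormal(u1, u2, -u3))   # flipping a sign gives another valid basis
```

Flipping the sign of the last vector, or rotating the last two vectors within the plane perpendicular to the first one, gives further orthonormal bases with the same first vector.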

3: Orthonormalization#

Find the solution set to the homogeneous equation

\[x_1 + x_2 + x_3 = 0\]

and justify that it constitutes a subspace in \(\mathbb{R}^3\). Find an orthonormal basis for this solution space.

Hint

If we are to find an orthonormal basis for the solution space, we first need to find a basis for the solution space, meaning we must solve the equation.

Hint

Let \(x_2=t_1\) and \(x_3=t_2\).

Hint

With \(x_2=t_1\) and \(x_3=t_2\) we find that

\[(x_1, x_2, x_3) = t_1(-1,1,0) + t_2(-1,0,1), \quad t_1, t_2 \in \mathbb{R}.\]

How do we form a basis for this solution space?

Hint

The solution space is \(\operatorname{span}\lbrace(-1,1,0),(-1,0,1)\rbrace\), so a basis can be formed by the vectors

\[\pmb{v}_1 = (-1,1,0), \quad \pmb{v}_2 = (-1,0,1).\]

Now they just need orthonormalization.

Hint

When two vectors need to be orthonormalized, we must first rotate them relative to each other within the space they span so that they become orthogonal (perpendicular to each other) while still spanning the same space. Secondly, they must be normalized, meaning they must either be lengthened or shortened so that they all have a length of 1.

Hint

Fortunately, we can simply carry this out by following the Gram-Schmidt procedure.
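The two steps described in the hints (make orthogonal, then normalize) can be sketched as a small function. This is only an illustration for real vectors, written with plain SymPy; the function name `gram_schmidt` is chosen here and is not part of SymPy or dtumathtools.

```python
from sympy import Matrix, simplify

def gram_schmidt(vectors):
    """Orthonormalize a list of linearly independent real column vectors."""
    basis = []
    for v in vectors:
        # Step 1: remove the components along the vectors found so far
        w = v
        for u in basis:
            w = w - v.dot(u) * u
        # Step 2: scale the remainder to length 1
        basis.append(w / w.norm())
    return basis

# Basis of the solution space of x1 + x2 + x3 = 0
v1 = Matrix([-1, 1, 0])
v2 = Matrix([-1, 0, 1])
u1, u2 = gram_schmidt([v1, v2])
print(u1.T, u2.T)
```

The resulting vectors still satisfy \(x_1 + x_2 + x_3 = 0\), because each step only forms linear combinations of \(\pmb{v}_1\) and \(\pmb{v}_2\).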

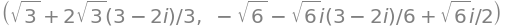

Answer

An orthonormal basis for the solution space of the equation is formed by the vectors

\[\pmb{u}_1 = \tfrac{1}{\sqrt{2}}(-1,1,0), \quad \pmb{u}_2 = \tfrac{1}{\sqrt{6}}(-1,-1,2).\]

x1, x2, x3 = symbols('x1 x2 x3')

eqn = Eq(x1 + x2 + x3, 0)

A, b = linear_eq_to_matrix(eqn, [x1, x2, x3])

# linsolve((A, b), x1, x2, x3) # alternatively

A.nullspace()

v1, v2 = A.nullspace()

u1 = v1/v1.norm()

w = v2 - inner(v2, u1) * u1

u2 = w/w.norm()

u1, u2

# With Sympy

GramSchmidt([v1, v2], orthonormal = True)

4: Orthogonal Projections#

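Let \(\boldsymbol{y}=(2,1,2) \in \mathbb{R}^3\) be given. Then the projection of \(\boldsymbol{x} \in \mathbb{R}^3\) on the line \(Y = \operatorname{span}\{\boldsymbol{y}\}\) is given by:

\[\operatorname{Proj}_Y(\boldsymbol{x}) = \langle \boldsymbol{x}, \boldsymbol{u} \rangle \boldsymbol{u},\]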

where \(\boldsymbol{u} = \frac{\boldsymbol{y}}{||\boldsymbol{y}||}\).

Question a#

Let \(\boldsymbol{x} = (1,2,3) \in \mathbb{R}^3\). Calculate \(\operatorname{Proj}_Y(\boldsymbol{x})\), \(\operatorname{Proj}_Y(\boldsymbol{y})\) and \(\operatorname{Proj}_Y(\boldsymbol{u})\).

Question b#

As usual, we consider all vectors as column vectors. Now, find the \(3 \times 3\) matrix \(P = \pmb{u} \pmb{u}^T\) and compute both \(P\boldsymbol{x}\) and \(P\boldsymbol{y}\).

y = Matrix([2,1,2])

x = Matrix([1,2,3])

u = y.normalized()

u, inner(x, u) * u, inner(y, u) * u, inner(u, u) * u

# Note that y (and u) already belong to the line, so y = proj_Y(y)

y == inner(y, u) * u

True

P = u * u.T

P, P * x, P * y

# P is a projection matrix, so P^2 = P

P * P == P

True
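The agreement between \(P\pmb{x}\) and the projection formula is no coincidence. A short derivation, using only that matrix multiplication is associative and that \(\pmb{u}^T \pmb{u} = \Vert \pmb{u} \Vert^2 = 1\):

\[P \pmb{x} = (\pmb{u} \pmb{u}^T) \pmb{x} = \pmb{u} (\pmb{u}^T \pmb{x}) = \langle \pmb{x}, \pmb{u} \rangle \pmb{u} = \operatorname{Proj}_Y(\pmb{x}),\]

\[P^2 = \pmb{u} (\pmb{u}^T \pmb{u}) \pmb{u}^T = \pmb{u} \pmb{u}^T = P.\]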

5: An Orthonormal Basis for a Subspace of \(\mathbb{C}^4\)#

Question a#

Find an orthonormal basis \(\pmb{u}_1, \pmb{u}_2\) for the subspace \(Y = \operatorname{span}\{\pmb{v}_1, \pmb{v}_2\}\) that is spanned by the vectors:

\[\pmb{v}_1 = [i, 1, 1, 0]^T, \quad \pmb{v}_2 = [0, i, i, \sqrt{2}]^T.\]

v1 = Matrix([I, 1, 1, 0])

v2 = Matrix([0, I, I, sqrt(2)])

Hint

You can find it using the Gram-Schmidt procedure. Check your result with the Sympy command GramSchmidt([v1,v2], orthonormal=True).

Question b#

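Let

\[\pmb{x} = [3i,\; 3-2i,\; 3-2i,\; -2\sqrt{2}]^T.\]

Calculate \(\langle \pmb{x}, \pmb{u}_1 \rangle\), \(\langle \pmb{x}, \pmb{u}_2 \rangle\) as well as

\[\langle \pmb{x}, \pmb{u}_1 \rangle \pmb{u}_1 + \langle \pmb{x}, \pmb{u}_2 \rangle \pmb{u}_2.\]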

What does this linear combination give? Does \(\pmb{x}\) belong to the subspace \(Y\)?

x = 3 * v1 - 2 * v2 # This is how x is constructed in the exercise

x

u1 = v1.normalized()

w = v2 - inner(v2, u1) * u1

u2 = w.normalized()

w.norm(), u1, u2

inner(x, u1), inner(x, u2)

expand(inner(x, u1)), expand(inner(x, u2))

expand(inner(x, u1) * u1 + inner(x, u2) * u2)

\(\pmb{x}\) belongs to \(Y\) since \(\pmb{x}\) can be written as a linear combination of \(\pmb{u}_1\) and \(\pmb{u}_2\).
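Membership of \(Y\) can also be tested without an orthonormal basis, by asking whether \(a \pmb{v}_1 + b \pmb{v}_2 = \pmb{x}\) has a solution. The sketch below uses SymPy's `linsolve`; the symbol names `a` and `b` are introduced here for illustration.

```python
from sympy import Matrix, I, sqrt, symbols, linsolve, simplify

v1 = Matrix([I, 1, 1, 0])
v2 = Matrix([0, I, I, sqrt(2)])
x = 3 * v1 - 2 * v2

# Solve a*v1 + b*v2 = x for the unknown coefficients a and b
a, b = symbols('a b')
A = Matrix.hstack(v1, v2)
solutions = linsolve((A, x), a, b)

# A non-empty solution set means that x lies in span{v1, v2}
coeffs = [simplify(c) for c in next(iter(solutions))]
print(coeffs)
```

If \(\pmb{x}\) did not belong to \(Y\), the system would be inconsistent and `linsolve` would return the empty set.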

6: A Python Algorithm#

Question a#

Without running the following code, explain what it does and explain what you expect the output to be. Then run the code in a Jupyter Notebook.

from sympy import *

from dtumathtools import *

init_printing()

x1, x2, x3 = symbols('x1:4', real=True)

eqns = [Eq(1*x1 + 2*x2 + 3*x3, 1), Eq(4*x1 + 5*x2 + 6*x3, 0), Eq(5*x1 + 7*x2 + 8*x3, -1)]

eqns

A, b = linear_eq_to_matrix(eqns,x1,x2,x3)

T = A.row_join(b) # Augmented matrix

A, b, T

Question b#

We will continue the Jupyter Notebook by adding the following code (as before, do not run it yet). Go through the code by hand (think through the for loops). What \(T\) matrix will be the result? Copy-paste the code into an AI tool, such as https://copilot.microsoft.com/ (log in with your DTU account), and ask it to explain the code line by line. Verify the result by running the code in a Jupyter Notebook. Remember that T.shape[0] gives the number of rows in the matrix \(T\).

for col in range(T.shape[0]):
    for row in range(col + 1, T.shape[0]):
        T[row, :] = T[row, :] - T[row, col] / T[col, col] * T[col, :]
    T[col, :] = T[col, :] / T[col, col]

T

Question c#

Write Python code that ensures zeros above the diagonal in the matrix \(T\) so that \(T\) ends up in reduced row-echelon form.

Note

Do not take into account any divisions by zero (for general \(T\) matrices). We will here assume that the computations are possible.

Hint

You will need two for loops, such as:

for col in range(T.shape[0] - 1, -1, -1):
    for row in range(col - 1, -1, -1):

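Remember that index \(-1\) in Python gives the last element. In range(start, stop, step), however, the stop value is excluded, so range(n - 1, -1, -1) counts down from \(n-1\) to \(0\).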

Hint

Ask an AI tool, such as https://copilot.microsoft.com/ (log in with your DTU account), for help if you get stuck. You should, though, first attempt to work on the exercise on your own.

Answer

# Backwards Elimination: Create zeros above the diagonal

for col in range(T.shape[0] - 1, -1, -1):
    for row in range(col - 1, -1, -1):
        T[row, :] = T[row, :] - T[row, col] * T[col, :]

T
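One way to check the combined result of Questions b and c is to compare it with SymPy's built-in `rref` method. The sketch below repeats the two loops on the augmented matrix from Question a; the variable name `expected` is introduced only for this comparison.

```python
from sympy import Matrix

# Augmented matrix of the system from Question a
T = Matrix([[1, 2, 3, 1],
            [4, 5, 6, 0],
            [5, 7, 8, -1]])
expected = T.rref()[0]   # SymPy's reduced row-echelon form, kept for comparison

# Forward elimination (Question b): zeros below the diagonal, ones on it
for col in range(T.shape[0]):
    for row in range(col + 1, T.shape[0]):
        T[row, :] = T[row, :] - T[row, col] / T[col, col] * T[col, :]
    T[col, :] = T[col, :] / T[col, col]

# Backward elimination (Question c): zeros above the diagonal
for col in range(T.shape[0] - 1, -1, -1):
    for row in range(col - 1, -1, -1):
        T[row, :] = T[row, :] - T[row, col] * T[col, :]

print(T == expected)
```

Note that `rref` returns a pair (the reduced matrix and the pivot columns), which is why only the first component is used.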

Question d#

What kind of algorithm have we implemented? Test the same algorithm on:

x1, x2, x3, x4 = symbols('x1:5', real=True)

eqns = [Eq(1*x1 + 2*x2 + 3*x3, 1), Eq(4*x1 + 5*x2 + 6*x3, 0), Eq(4*x1 + 5*x2 + 6*x3, 0), Eq(5*x1 + 7*x2 + 8*x3, -1)]

A, b = linear_eq_to_matrix(eqns,x1,x2,x3,x4)

T = A.row_join(b) # Augmented matrix

Answer

This is the Gaussian elimination procedure for solving linear equation systems (review the topic in the Mathematics 1a textbook). The output is the reduced row-echelon form of the input matrix. We have not taken column switches (nor row switches) into account and thus there is a risk of division by zero if the first \(n\) columns of an \(n \times m\) matrix \(T\) are not linearly independent.
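The division by zero can be avoided by searching for a nonzero pivot and swapping rows. The following is a minimal sketch of that idea, not the implementation used by SymPy; the function name `rref_with_row_swaps` is made up for this example. It is tested on the augmented matrix of the system in Question d, where the duplicated equation produces a zero on the diagonal.

```python
from sympy import Matrix

def rref_with_row_swaps(T):
    """Reduced row-echelon form of T, swapping rows whenever a pivot is zero."""
    T = T.copy()
    n_rows, n_cols = T.shape
    pivot_row = 0
    for col in range(n_cols):
        if pivot_row == n_rows:
            break
        # Rows at or below pivot_row with a nonzero entry in this column
        candidates = [r for r in range(pivot_row, n_rows) if T[r, col] != 0]
        if not candidates:
            continue  # no pivot in this column, move on to the next one
        if candidates[0] != pivot_row:
            T.row_swap(pivot_row, candidates[0])
        # Scale the pivot row so that the pivot becomes 1
        T[pivot_row, :] = T[pivot_row, :] / T[pivot_row, col]
        # Create zeros above and below the pivot
        for row in range(n_rows):
            if row != pivot_row:
                T[row, :] = T[row, :] - T[row, col] * T[pivot_row, :]
        pivot_row += 1
    return T

# Augmented matrix of the system in Question d (the x4 column is zero)
T = Matrix([[1, 2, 3, 0, 1],
            [4, 5, 6, 0, 0],
            [4, 5, 6, 0, 0],
            [5, 7, 8, 0, -1]])
R = rref_with_row_swaps(T)
print(R == T.rref()[0])
```

Unlike the loops above, this version walks through the columns and keeps a separate counter for the pivot row, so it also handles columns without a pivot.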

7: Orthogonal Polynomials#

This is an exercise from the textbook. You can find help there.

Consider the list \(\alpha=1,x,x^{2},x^{3}\) of polynomials in \(P_{3}([-1,1])\) equipped with the \(L^{2}\) inner product.

Question a#

Argue that \(\alpha\) is a list of linearly independent vectors.

Hint

The assumption is that \(c_1 + c_2 x + c_3 x^2 + c_4 x^3 = 0\) for all \(x \in [-1,1]\). We will show that \(c_1 = c_2 = c_3 = c_4 = 0\), since that will imply that the polynomials in \(\alpha\) are linearly independent.

Hint

Choose four different \(x\) values and evaluate the assumption for those. Then we get four equations in the four unknowns \(c_1, c_2, c_3, c_4\). The \(x\) values must be chosen such that the equation system only has the zero solution. (Why?)

Hint

We choose, for example, \(x = 0, 1, -1, 1/2\). This gives the following equations: \(c_{1} = 0, c_{1} + c_{2} + c_{3} + c_{4} = 0, c_{1} - c_{2} + c_{3} - c_{4} = 0, c_{1} + \frac{c_{2}}{2} + \frac{c_{3}}{4} + \frac{c_{4}}{8} = 0\).

Hint

Check that the four equations only have the zero solution. Conclude that the four polynomials are linearly independent.

The students are probably not used to working with linearly independent functions. And they likely don’t know, for example, that the monomial basis is linearly independent. This follows, of course, from “The fundamental theorem of algebra”, taught in Math1a (although it was not proven). The task here can be solved with the following steps:

x = symbols('x', real=True)

c1,c2,c3,c4 = symbols('c1:5', real=True)

v1 = 1

v2 = x

v3 = x**2

v4 = x**3

eq1 = Eq(c1*v1 + c2*v2.subs(x,0) + c3*v3.subs(x,0) + c4*v4.subs(x,0),0)

eq2 = Eq(c1*v1 + c2*v2.subs(x,1) + c3*v3.subs(x,1) + c4*v4.subs(x,1),0)

eq3 = Eq(c1*v1 + c2*v2.subs(x,-1) + c3*v3.subs(x,-1) + c4*v4.subs(x,-1),0)

eq4 = Eq(c1*v1 + c2*v2.subs(x,S(1)/2) + c3*v3.subs(x,S(1)/2) + c4*v4.subs(x,S(1)/2),0)

eq1, eq2, eq3, eq4

A,b = linear_eq_to_matrix([eq1,eq2,eq3,eq4],c1,c2,c3,c4)

linsolve((A,b),c1,c2,c3,c4)
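The same conclusion can be reached with a determinant: the coefficient matrix of the four equations has the values of \(1, x, x^2, x^3\) at the chosen points as its rows, and the system only has the zero solution exactly when this matrix is invertible. A small sketch, where the names `points`, `V` and `d` are chosen for this example:

```python
from sympy import Matrix, Rational

points = [0, 1, -1, Rational(1, 2)]

# Row i holds the values of 1, x, x^2, x^3 at the point x = points[i]
V = Matrix([[p**k for k in range(4)] for p in points])

# A nonzero determinant means that c1 = c2 = c3 = c4 = 0 is the only solution
d = V.det()
print(d)
```

A matrix of this form is known as a Vandermonde matrix, and its determinant is nonzero whenever the chosen points are pairwise different. This answers the "Why?" in the hint above.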

Question b#

Apply the Gram-Schmidt procedure on \(\alpha\) and show that the procedure yields a normalized version of the Legendre polynomials.

Hint

For calculating the norms and the inner products, first determine the integrals by hand. Afterwards, use the integrate command in Sympy with code lines such as x = symbols('x', real=True) and integrate(x**2, (x,-1,1)) to check your work.

Hint

Check your manual calculation of the polynomial \(u_1\) with Sympy:

x = symbols('x', real=True)

v1 = 1

v2 = x

v3 = x**2

v4 = x**3

v1_norm = sqrt(integrate(v1**2, (x,-1,1)))

u1 = v1/v1_norm

u1

Hint

Check your manual calculation of the polynomial \(u_2\) with Sympy:

w2 = v2 - integrate(v2*u1, (x,-1,1)) * u1

u2 = w2/sqrt(integrate(w2**2, (x,-1,1)))

u2

Hint

Check your manual calculation of the polynomial \(u_3\) with Sympy:

w3 = v3 - integrate(v3*u1, (x,-1,1)) * u1 - integrate(v3*u2, (x,-1,1)) * u2

u3 = w3/sqrt(integrate(w3**2, (x,-1,1)))

Hint

Check your manual calculation of the polynomial \(u_4\) with Sympy:

w4 = v4 - integrate(v4*u1, (x,-1,1)) * u1 - integrate(v4*u2, (x,-1,1)) * u2 - integrate(v4*u3, (x,-1,1)) * u3

u4 = w4/sqrt(integrate(w4**2, (x,-1,1)))

Answer

The answer is obtained by running the code as given in the above hints. Compare the result with legendre(0,x), legendre(1,x), legendre(2,x).factor() and legendre(3,x).factor(). They should differ by a scaling factor.

v1_norm = sqrt(integrate(v1**2, (x,-1,1)))

u1 = v1/v1_norm

u1

w2 = v2 - integrate(v2*u1, (x,-1,1)) * u1

u2 = w2/sqrt(integrate(w2**2, (x,-1,1)))

w3 = v3 - integrate(v3*u1, (x,-1,1)) * u1 - integrate(v3*u2, (x,-1,1)) * u2

u3 = w3/sqrt(integrate(w3**2, (x,-1,1)))

w4 = v4 - integrate(v4*u1, (x,-1,1)) * u1 - integrate(v4*u2, (x,-1,1)) * u2 - integrate(v4*u3, (x,-1,1)) * u3

u4 = w4/sqrt(integrate(w4**2, (x,-1,1)))

u1, u2, u3, u4

# This is a normalized version of the Legendre polynomials:

legendre(0,x), legendre(1,x), legendre(2,x).factor(), legendre(3,x).factor()
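The scaling factor can be made explicit. The sketch below repeats the procedure as a loop and compares the result with \(\sqrt{(2k+1)/2}\, P_k\), where \(P_k\) is `legendre(k, x)`; this factor follows from the standard identity \(\int_{-1}^{1} P_k(x)^2 \, dx = \frac{2}{2k+1}\). The helper names `l2_inner` and `gram_schmidt_l2` are made up for this example, and the list `us` is indexed from 0, so `us[0]` corresponds to \(u_1\).

```python
from sympy import symbols, integrate, sqrt, legendre, Rational, simplify, S

x = symbols('x', real=True)

def l2_inner(f, g):
    """L^2 inner product of two real polynomials on [-1, 1]."""
    return integrate(f * g, (x, -1, 1))

def gram_schmidt_l2(polys):
    """Gram-Schmidt with respect to l2_inner; returns an orthonormal list."""
    basis = []
    for v in polys:
        w = v - sum(l2_inner(v, u) * u for u in basis)
        basis.append(w / sqrt(l2_inner(w, w)))
    return basis

us = gram_schmidt_l2([S(1), x, x**2, x**3])

# Differences u_k - sqrt((2k + 1)/2) * P_k; all of them should simplify to 0
differences = [simplify(u - sqrt(Rational(2 * k + 1, 2)) * legendre(k, x))
               for k, u in enumerate(us)]
print(differences)
```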

Exercises – Short Day#

1: Matrix Multiplications#

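Define

\[A = \begin{bmatrix} 1 & 2 & 3 & 4 \\ 5 & 6 & 7 & 8 \\ 4 & 4 & 4 & 4 \end{bmatrix}, \quad \pmb{x} = \begin{bmatrix} 1 \\ 2 \\ -1 \\ 1 \end{bmatrix} = \begin{bmatrix} x_1 \\ x_2 \\ x_3 \\ x_4 \end{bmatrix}.\]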

Let \(\pmb{a}_1, \pmb{a}_2,\pmb{a}_3,\pmb{a}_4\) denote the columns in \(A\). Let \(\pmb{b}_1, \pmb{b}_2,\pmb{b}_3\) denote the rows in \(A\). We now calculate \(A\pmb{x}\) in two different ways.

Question a#

Method 1: As a linear combination of the columns. Calculate the linear combination

\[x_1 \pmb{a}_1 + x_2 \pmb{a}_2 + x_3 \pmb{a}_3 + x_4 \pmb{a}_4.\]

Question b#

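Method 2: As “dot product” of the rows in \(A\) with \(\pmb{x}\). Calculate

\[\begin{bmatrix} \pmb{b}_1 \pmb{x} \\ \pmb{b}_2 \pmb{x} \\ \pmb{b}_3 \pmb{x} \end{bmatrix}.\]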

Note

Since \(\pmb{b}_k\) is a row vector, \((\pmb{b}_k)^T\) is a column vector. Hence, the product \(\pmb{b}_k \pmb{x}\) corresponds to the dot product of \(\pmb{x}\) and \((\pmb{b}_k)^T\).

Question c#

Calculate \(A\pmb{x}\) in Sympy and compare with your calculations from the previous questions.

A = Matrix([[1,2,3,4],[5,6,7,8],[4,4,4,4]])

a1, a2, a3, a4 = A[:,0], A[:,1], A[:,2], A[:,3]

b1, b2, b3 = A[0,:], A[1,:], A[2,:]

x = Matrix([1,2,-1,1])

A*x, x[0]*a1 + x[1]*a2 + x[2]*a3 + x[3]*a4, b1.dot(x), b2.dot(x), b3.dot(x)

2: A Subspace in \(\mathbb{C}^4\) and its Orthogonal Complement#

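Let the following vectors be given in \(\mathbb{C}^4\):

\[\pmb{v}_1 = [1,1,1,1]^T, \quad \pmb{v}_2 = [3i,i,i,3i]^T, \quad \pmb{v}_3 = [2,0,-2,4]^T, \quad \pmb{v}_4 = [4-3i,\,2-i,\,-i,\,6-3i]^T.\]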

A subspace \(Y\) in \(\mathbb{C}^4\) is determined by \(Y=\operatorname{span}\lbrace\pmb{v}_1,\pmb{v}_2,\pmb{v}_3,\pmb{v}_4\rbrace\).

Question a#

v1 = Matrix([1,1,1,1])

v2 = Matrix([3*I,I,I,3*I])

v3 = Matrix([2,0,-2,4])

v4 = Matrix([4-3*I,2-I,-I,6-3*I])

Run the command GramSchmidt([v1,v2,v3,v4], orthonormal=True) in Python. What does Python tell you?

# GramSchmidt([v1, v2, v3, v4], orthonormal = True)
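The call is left commented out because SymPy's GramSchmidt expects linearly independent vectors and is expected to report an error otherwise. A rank computation shows the dependency directly; this is only a sketch of one possible check, and the name `V` is chosen here.

```python
from sympy import Matrix, I

v1 = Matrix([1, 1, 1, 1])
v2 = Matrix([3*I, I, I, 3*I])
v3 = Matrix([2, 0, -2, 4])
v4 = Matrix([4 - 3*I, 2 - I, -I, 6 - 3*I])

# The rank counts the linearly independent columns. A rank below 4 means
# that the four vectors are linearly dependent.
V = Matrix.hstack(v1, v2, v3, v4)
print(V.rank())
```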

Question b#

Now show that \((\pmb{v}_1,\pmb{v}_2,\pmb{v}_3)\,\) constitutes a basis for \(Y\), and find the coordinate vector of \(\pmb{v}_4\,\) with respect to this basis.

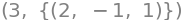

Answer

The vectors \(\pmb{v}_1\), \(\pmb{v}_2\) and \(\pmb{v}_3\) are linearly independent, while \(\pmb{v}_4=2\pmb{v}_1-\pmb{v}_2+\pmb{v}_3\), so

\[{}_{v} \pmb{v}_4 = [2,-1,1]^T\]

is the coordinate vector with respect to basis \(v\).

A = Matrix.hstack(v1, v2, v3)

A.rank(), linsolve((A, v4))

Question c#

Provide an orthonormal basis for \(Y\).

Hint

The vectors \(\pmb{v}_1\), \(\pmb{v}_2\) and \(\pmb{v}_3\) constitute a basis for \(Y\), so they just need to be orthonormalized, for example with the use of the Gram-Schmidt procedure.

Answer

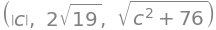

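The vectors

\[\pmb{u}_1 = \tfrac{1}{2}[1,1,1,1]^T, \quad \pmb{u}_2 = \tfrac{i}{2}[1,-1,-1,1]^T, \quad \pmb{u}_3 = \tfrac{1}{2}[-1,1,-1,1]^T\]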

constitute an orthonormal basis for \(Y\).

u1, u2, u3 = GramSchmidt([v1, v2, v3], orthonormal = True)

u1, u2, u3

Question d#

Determine the coordinate vector of \(\pmb{v}_4 \in Y\) with respect to the orthonormal basis for \(Y\).

Hint

This can be calculated with inner products (as we are used to by now) or with a fitting matrix-vector product.

Umat = Matrix.hstack(u1, u2, u3)

simplify(Umat.adjoint() * v4 )
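The same coordinates can be found with inner products, as mentioned in the hint: the \(k\)-th coordinate is \(\langle \pmb{v}_4, \pmb{u}_k \rangle\). The sketch below is self-contained and therefore uses its own small Gram-Schmidt helper (`gram_schmidt`, a name made up here) instead of SymPy's GramSchmidt.

```python
from sympy import Matrix, I, zeros

def inner(a, b):
    """Standard inner product on C^n, conjugating the second argument."""
    return a.dot(b, conjugate_convention='right')

def gram_schmidt(vectors):
    """Classical Gram-Schmidt; assumes the vectors are linearly independent."""
    basis = []
    for v in vectors:
        w = v
        for u in basis:
            w = w - inner(v, u) * u
        basis.append(w / w.norm())
    return basis

v1 = Matrix([1, 1, 1, 1])
v2 = Matrix([3*I, I, I, 3*I])
v3 = Matrix([2, 0, -2, 4])
v4 = Matrix([4 - 3*I, 2 - I, -I, 6 - 3*I])

u1, u2, u3 = gram_schmidt([v1, v2, v3])

# k-th coordinate of v4 with respect to the orthonormal basis: <v4, u_k>
coords = Matrix([inner(v4, u) for u in (u1, u2, u3)]).expand()

# Rebuilding v4 from its coordinates should leave a zero residual
residual = (coords[0]*u1 + coords[1]*u2 + coords[2]*u3 - v4).expand()
print(coords.T, residual.T)
```

The order of the arguments matters for complex vectors: \(\langle \pmb{v}_4, \pmb{u}_k \rangle\) and \(\langle \pmb{u}_k, \pmb{v}_4 \rangle\) are complex conjugates of each other.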

Question e#

Determine the orthogonal complement \(Y^\perp\) in \(\mathbb{C}^4\) to \(Y\).

Hint

First, guess a vector and check that your guess is not located in \(Y\), meaning that it is linearly independent of a basis for \(Y\).

Hint

You can, for example, try the guess \((1,0,0,0)\).

Hint

A vector that belongs to the orthogonal complement is a vector that is orthogonal to the basis vectors in a basis for \(Y\).

Hint

Use the Gram-Schmidt procedure: continue from where you left off in question c and include the vector you have just guessed as a fourth vector. You do not need to normalize the vector in the last step, since it is enough to just orthogonalize. (Why?)

Answer

The orthogonal complement is a 1-dimensional subspace of \(\mathbb{C}^4\) spanned by

\[[1,1,-1,-1]^T.\]

An Alternative Approach

It is not necessary to continue with the Gram-Schmidt procedure as described above. Here is an alternative approach that starts directly from the definition of the orthogonal complement: Let \(\pmb{c}=[c_1,c_2,c_3,c_4]^T\). Write the three orthogonality conditions as equations in \(c_i\):

\[\langle \pmb{c}, \pmb{v}_1 \rangle = 0, \quad \langle \pmb{c}, \pmb{v}_2 \rangle = 0, \quad \langle \pmb{c}, \pmb{v}_3 \rangle = 0.\]

Then solve this linear system of equations, and determine/choose a vector that spans the null space (the kernel).
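In matrix form, the three conditions \(\langle \pmb{c}, \pmb{v}_k \rangle = 0\) read \(V^* \pmb{c} = \pmb{0}\), where \(V = [\pmb{v}_1 \vert \pmb{v}_2 \vert \pmb{v}_3]\) and \(V^*\) is the conjugate transpose. A minimal sketch of this approach in SymPy, where the names `V`, `null_basis` and `c` are chosen for this example:

```python
from sympy import Matrix, I

v1 = Matrix([1, 1, 1, 1])
v2 = Matrix([3*I, I, I, 3*I])
v3 = Matrix([2, 0, -2, 4])
V = Matrix.hstack(v1, v2, v3)

# Row k of V.adjoint() holds the conjugated entries of v_k, so entry k of
# V.adjoint() * c is the inner product <c, v_k>.
null_basis = V.adjoint().nullspace()
c = null_basis[0]
print(len(null_basis), c.T)
```

The null space has dimension \(4 - 3 = 1\), so any nonzero multiple of the returned vector spans \(Y^\perp\).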

Question f#

Choose a vector \(\pmb{y}\) in \(Y^\perp\) and choose a vector \(\pmb{x}\) in \(Y\). Calculate \(\Vert \pmb{x} \Vert\), \(\Vert \pmb{y} \Vert\) and \(\Vert \pmb{x} + \pmb{y} \Vert\). Check that \(\Vert \pmb{x} \Vert^2 +\Vert \pmb{y} \Vert^2 = \Vert \pmb{x} + \pmb{y} \Vert^2\).

*u_vectors, u_perp = GramSchmidt([v1, v2, v3, Matrix([1,0,0,0])], orthonormal = True)

u_perp

c = symbols('c', real=True)

y = c * u_perp # c=1 or c=2 are ok choices

x = v4 # Could do this more generally

y.norm(), x.norm(), (x+y).norm()

(x+y).norm()**2 == x.norm()**2 + y.norm()**2

True

3: Orthogonal Projection on a Plane#

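Let the matrix \(U = [\pmb{u}_1, \pmb{u}_2]\) be given by:

\[U = \begin{bmatrix} \frac{\sqrt{3}}{3} & \frac{\sqrt{2}}{2} \\ \frac{\sqrt{3}}{3} & 0 \\ -\frac{\sqrt{3}}{3} & \frac{\sqrt{2}}{2} \end{bmatrix}.\]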

Question a#

Show that \(\pmb{u}_1, \pmb{u}_2\) is an orthonormal basis for \(Y = \operatorname{span}\{\pmb{u}_1, \pmb{u}_2\}\).

Answer

By calculation, it is shown that \(\langle \pmb{u}_1, \pmb{u}_2 \rangle = 0\) and \(\Vert \pmb{u}_1 \Vert = \Vert \pmb{u}_2 \Vert = 1\). This shows that \(\pmb{u}_1, \pmb{u}_2\) is an orthonormal list of vectors. Therefore, this list is linearly independent and thus constitutes a basis for \(Y\). We conclude that \(\pmb{u}_1, \pmb{u}_2\) is an orthonormal basis for \(Y\).

Question b#

Let \(P = U U^* \in \mathbb{R}^{3 \times 3}\). As explained in this section of the textbook, this will give us a projection matrix that describes the orthogonal projection \(\pmb{x} \mapsto P \pmb{x}\), \(\mathbb{R}^3 \to \mathbb{R}^3\) on the plane \(Y = \operatorname{span}\{\pmb{u}_1, \pmb{u}_2\}\). Verify that \(P^2 = P\), \(P \pmb{u}_1 = \pmb{u}_1\), and \(P \pmb{u}_2 = \pmb{u}_2\).

Answer

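A direct computation gives

\[P = U U^* = \begin{bmatrix} \tfrac{5}{6} & \tfrac{1}{3} & \tfrac{1}{6} \\ \tfrac{1}{3} & \tfrac{1}{3} & -\tfrac{1}{3} \\ \tfrac{1}{6} & -\tfrac{1}{3} & \tfrac{5}{6} \end{bmatrix},\]

and multiplying \(P\) by itself shows that \(P^2 = P\). From this it is easy to show that \(P \pmb{u}_1 = \pmb{u}_1\) and that \(P \pmb{u}_2 = \pmb{u}_2\). In fact, \(P (c_1 \pmb{u}_1 + c_2 \pmb{u}_2) = c_1 \pmb{u}_1 + c_2 \pmb{u}_2\) for all choices of \(c_1, c_2 \in \mathbb{R}\).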
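These properties do not depend on the specific numbers in \(U\). A short derivation, using only that the columns of \(U\) are orthonormal, so that \(U^* U = I\) (the \(2 \times 2\) identity matrix); here \(\pmb{e}_k\) denotes the \(k\)-th standard basis vector of \(\mathbb{R}^2\), a notation introduced for this derivation:

\[P^2 = (U U^*)(U U^*) = U (U^* U) U^* = U U^* = P,\]

\[P \pmb{u}_k = U (U^* \pmb{u}_k) = U \pmb{e}_k = \pmb{u}_k, \quad k = 1, 2.\]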

Question c#

Choose a vector \(\pmb{x} \in \mathbb{R}^3\) that does not belong to \(Y\) and find the projection \(\operatorname{proj}_Y(\pmb{x})\) of \(\pmb{x}\) onto the plane \(Y\). Illustrate \(\pmb{x}\), \(Y\) and \(\operatorname{proj}_Y(\pmb{x})\) in a plot.

Answer

You can, for example, choose \(\pmb{x} = [1,2,3]^T\). You then find \(\operatorname{proj}_Y(\pmb{x}) = P \pmb{x} = [2,0,2]^T\). Note that \([2,0,2]^T = 2\sqrt{2} \pmb{u}_2 \in Y\).

U = Matrix([[sqrt(3)/3, sqrt(2)/2], [sqrt(3)/3, 0], [-sqrt(3)/3, sqrt(2)/2]])

P = U * U.T

x = Matrix([1,2,3])

t1, t2 = symbols('t1 t2')

r = t1 * U[:,0] + t2 * U[:,1]

p = dtuplot.plot3d_parametric_surface(r[0], r[1], r[2], (t1, -5, 5), (t2, -5, 5), show = False, rendering_kw = {"alpha": 0.5})

p.extend(dtuplot.scatter((x, P*x), show = False))

p.xlim = [-5,5]

p.ylim = [-5,5]

p.zlim = [-5,5]

p.show()

Question d#

Show that \(\pmb{x} - \operatorname{proj}_Y(\pmb{x})\) belongs to \(Y^\perp\).

Hint

With the choice \(\pmb{x} = [1,2,3]^T\) we get \(\pmb{y} := \pmb{x} - \operatorname{proj}_Y(\pmb{x}) = [-1,2,1]^T\). You need to show that the \(\pmb{y}\) vector is orthogonal to all vectors in \(Y\).

Hint

An arbitrary vector in \(Y\) can be written as \(c_1 \pmb{u}_1 + c_2 \pmb{u}_2\), where \(c_1, c_2 \in \mathbb{R}\).

Hint

Show that \(\langle \pmb{y}, \pmb{u}_1 \rangle = 0\) and \(\langle \pmb{y}, \pmb{u}_2 \rangle = 0\).

Answer

Since \(\langle c_1 \pmb{u}_1 + c_2 \pmb{u}_2, \pmb{y} \rangle = c_1 \langle \pmb{u}_1 , \pmb{y} \rangle + c_2 \langle \pmb{u}_2 , \pmb{y} \rangle = 0 + 0 = 0\), we see that \(\pmb{y} = [-1,2,1]^T \in Y^\perp\).

v1 = Matrix([1,1,-1])

v2 = Matrix([1,0,1])

u1,u2 = GramSchmidt([v1, v2], orthonormal = True)

U = Matrix.hstack(u1, u2)

P = U * U.T

P, P * v1, P * v2

x = Matrix([1,2,3])

P * x

x - P * x

This is the orthogonal projection of \(\pmb{x}\) on \(Y^\perp\). A vector belongs to \(Y^\perp\) if and only if its inner products with \(\pmb{u}_1\) and \(\pmb{u}_2\) are both zero.

4: Unitary Matrices#

Let a matrix \(F\) be given by:

n = 4

F = 1/sqrt(n) * Matrix(n, n, lambda k,j: exp(-2*pi*I*k*j/n))

F

State whether the following propositions are true or false:

\(F\) is unitary

\(F\) is invertible

\(F\) is orthogonal

\(F\) is symmetric

\(F\) is Hermitian

The columns in \(F\) constitute an orthonormal basis for \(\mathbb{C}^4\)

The columns in \(F\) constitute an orthonormal basis for \(\mathbb{R}^4\)

\(-F = F^{-1}\)

Answer

True

True

False

True

False

True

False

False

Note: This matrix is called the Fourier matrix. For all \(n\) it satisfies \(\overline{F} = F^* = F^{-1}\) and \(F = F^T\). The map \(\pmb{x} \mapsto F^* \pmb{x}\) is called the discrete Fourier transform and is important in many parts of engineering. See for example https://bootcamp.uxdesign.cc/the-most-important-algorithm-of-all-time-9ff1659ff3ef. The fast Fourier transform computes the same map as \(\pmb{x} \mapsto F^* \pmb{x}\), just implemented in a smart way.

display(F.adjoint() * F == eye(n))

F.adjoint() * F

True

display(F.rank() == n)

F.inv()

True

F.T * F == eye(n)

False

F.is_symmetric()

True

F.adjoint() == F

False

True, False

(True, False)

-F == F.inv()

False

Note: \(F^{-1} = \overline{F}\).